MCP Explained: The Universal Protocol for AI Tools

What is Model Context Protocol? Watch MCP client-server communication in action. Learn how this Anthropic standard connects LLMs to databases, APIs, and tools.

Learn how knowledge distillation trains small student models from large teachers. Tune temperature and watch soft labels in the interactive playground.

Prompt engineering hit a ceiling. Context engineering - controlling what goes into the model, not just how you ask - is the real skill for 2026 AI.

Learn how LoRA rank, alpha, and target modules control fine-tuning quality. Interactive playground lets you tune each parameter and see the impact live.

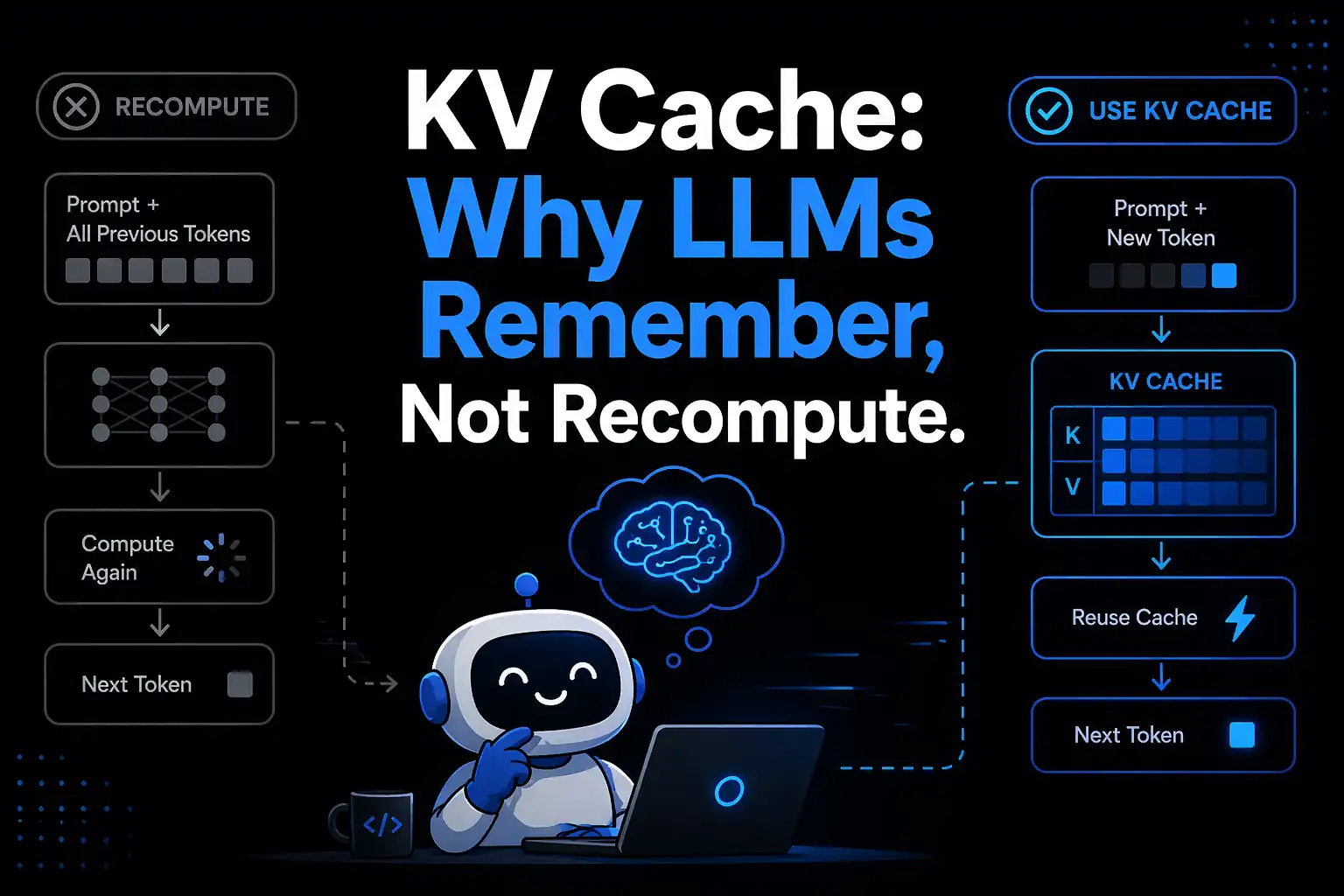

Learn how the key-value cache makes LLM inference fast by remembering past attention computations. Interactive simulator shows the speedup token by token.

Flash Attention fixes the memory bottleneck that leaves much of your GPU's compute idle during attention. Learn how tiling works and why most modern LLMs use it.

Learn how quantization compresses LLMs from 140 GB to 35 GB with minimal accuracy loss. What it is, how it works, and when to use INT8 vs INT4.